Why does entropy increase in closed systems?

The natural inclination of the physical world seems to be towards disarray, a slow, inexorable slide toward a state of uniformity where useful energy is spread thin. This phenomenon is encapsulated by the Second Law of Thermodynamics, which dictates that the total entropy of an isolated system can never decrease over time; it must either increase or, in the most idealized circumstances, remain constant. [5][7] When we discuss closed systems, we are often referring to something that exchanges energy but not mass with its surroundings, but the strict entropic law applies most cleanly to the isolated system—a system with no interaction whatsoever, like the entire universe. [6][7] Understanding why this increase happens requires moving past the simple notion of "messiness" and delving into the realm of probability and statistics.

# Law Stated

The Second Law of Thermodynamics provides a fundamental arrow for time. [5] If you watch a video of ice melting in a glass of water, you know immediately which direction is forward in time; the reverse (ice cubes spontaneously forming while the surrounding water warms up) simply doesn't happen in reality. [1] Entropy, often symbolized as $S$, is the measure that governs this directionality. [7]

For a process to occur spontaneously in an isolated system, the entropy of that system must increase. [5] This is the key takeaway. The universe, being the ultimate isolated system, is constantly increasing its total entropy. [5] While entropy can decrease in a non-isolated system—for example, organizing a messy room or building a complex machine—this local decrease in order is always accompanied by a greater increase in entropy somewhere else, usually in the environment that supplied the energy for that organization. [4] The Second Law does not forbid local decreases in entropy; it forbids a net decrease in the total entropy of the system plus its surroundings. [7]

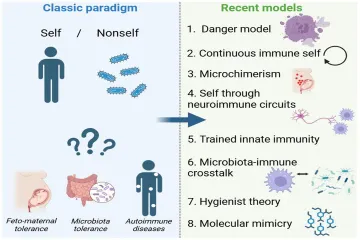

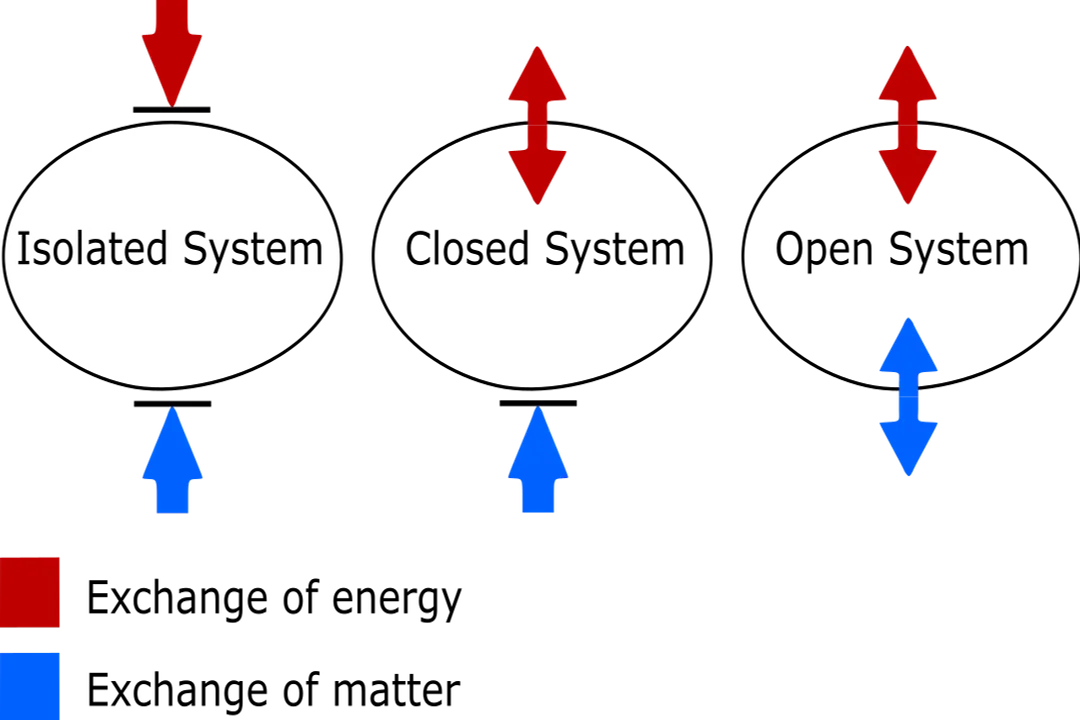

# System Boundaries

It is crucial to differentiate between the types of systems involved when discussing this law, as imprecise language often causes confusion. [7]

- Isolated System: No exchange of energy or mass with the surroundings. This is the environment where the entropy increase is absolute ($\Delta S \geq 0$). [6][5]

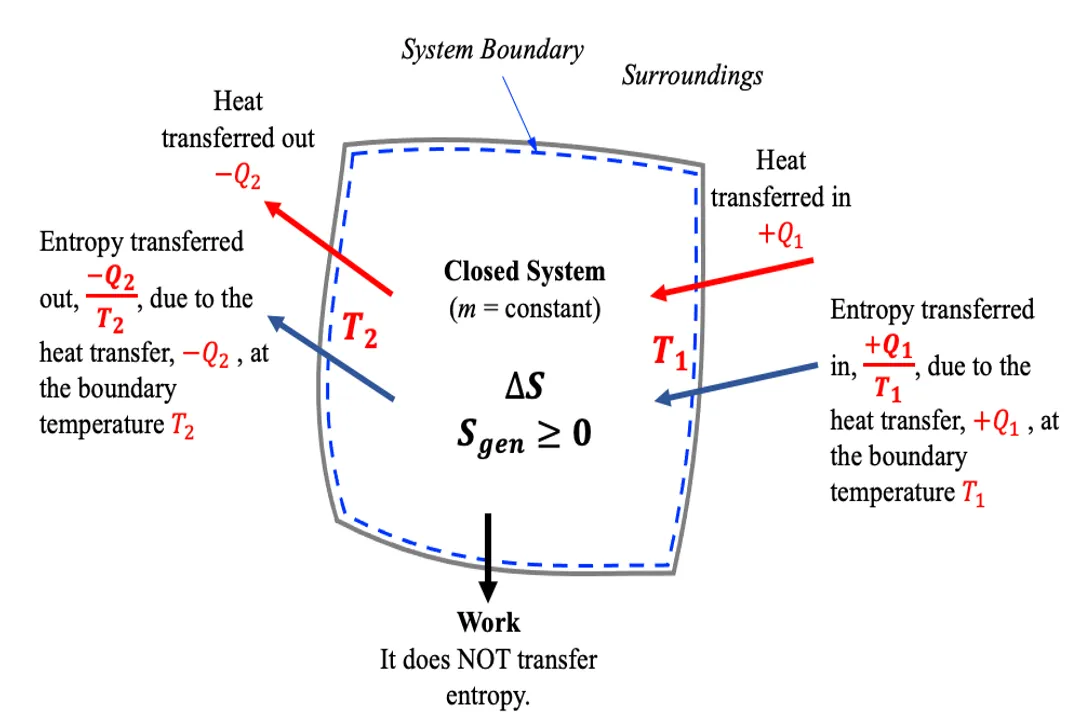

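- Closed System: Exchanges energy but not mass. The entropy change for the system itself, $\Delta S_{\text{sys}}$, plus the entropy change of the surroundings, $\Delta S_{\text{surr}}$, must result in a total entropy change ($\Delta S_{\text{total}}$) that is zero or positive ($\Delta S_{\text{total}} = \Delta S_{\text{sys}} + \Delta S_{\text{surr}} \geq 0$). [7]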

- Open System: Exchanges both energy and mass (like a living cell or the Earth, which receives energy from the Sun). [4] In open systems, entropy can decrease locally because the system is drawing in highly ordered, low-entropy energy (like sunlight) and exporting high-entropy energy (like waste heat). [4]
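The closed-system bookkeeping above can be sketched numerically. This is a minimal illustration, assuming both the system and its surroundings act as reservoirs at fixed temperatures; the function name and the numbers are hypothetical and chosen only for the example.

```python
def total_entropy_change(q_to_system, t_system, t_surroundings):
    """Entropy bookkeeping when heat q_to_system (in J) enters the system.

    Assumption for this sketch: both bodies stay at fixed temperatures (in K),
    so each entropy change is simply heat divided by temperature.
    """
    ds_system = q_to_system / t_system
    ds_surroundings = -q_to_system / t_surroundings
    return ds_system, ds_surroundings, ds_system + ds_surroundings


# A closed system at 350 K loses 1000 J of heat to surroundings at 300 K.
ds_sys, ds_surr, ds_total = total_entropy_change(-1000.0, 350.0, 300.0)
print(f"system:       {ds_sys:+.3f} J/K")
print(f"surroundings: {ds_surr:+.3f} J/K")
print(f"total:        {ds_total:+.3f} J/K")
```

The system's own entropy falls because it gives up heat, yet the surroundings gain more entropy than the system loses, so the total is still positive.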

When physicists state that entropy always increases, they are referring to the isolated system—the universe as a whole. Any local ordering, like the formation of a star or the growth of a human being, is an example of a temporary, statistically improbable configuration achieved at the expense of a much larger, irreversible increase in disorder elsewhere. [1]

# Microscopic View

To truly grasp the increase, we must look at entropy not just as a measure of disorder, but as a measure of probability through the lens of statistical mechanics. [1] Entropy ($S$) is mathematically related to the number of possible microscopic arrangements, or microstates ($\Omega$), that correspond to the system's observable macroscopic state (temperature, pressure, volume). [1] The relationship is given by Boltzmann's formula: $S = k_B \ln \Omega$, where $k_B$ is the Boltzmann constant. [1]

Consider a box divided in half, with all gas molecules confined to the left side (a highly ordered, low-entropy state). Comparatively few microscopic arrangements keep every molecule on the left side. If you remove the divider, the molecules will begin to spread out. They move randomly, governed by their kinetic energy. Statistically, there are an astronomical number of ways for those molecules to be distributed throughout the entire box (high entropy) compared to the few ways they can all remain perfectly segregated on the left (low entropy). [1]
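To put a number on the box example, here is a rough sketch using Boltzmann's formula $S = k_B \ln \Omega$. It assumes an ideal gas whose accessible volume doubles when the divider is removed, so each particle has twice as many positions available and $\Omega$ grows by a factor of $2^N$. The function names are illustrative, and the code works with $\ln \Omega$ directly because $\Omega$ itself is far too large to store.

```python
import math

K_B = 1.380649e-23        # Boltzmann constant in J/K (exact SI value)
N_AVOGADRO = 6.02214076e23  # particles per mole (exact SI value)


def boltzmann_entropy(ln_omega):
    """S = k_B * ln(Omega), taking ln(Omega) as input."""
    return K_B * ln_omega


def entropy_gain_on_doubling_volume(n_particles):
    """Entropy gain when every particle gets twice as many positions.

    Omega is multiplied by 2**n_particles, so ln(Omega) rises by
    n_particles * ln(2).
    """
    return boltzmann_entropy(n_particles * math.log(2))


delta_s = entropy_gain_on_doubling_volume(N_AVOGADRO)
print(f"Entropy gain for one mole: {delta_s:.3f} J/K")
```

For one mole the result equals $R \ln 2$, where $R$ is the gas constant, which matches the standard thermodynamic result for an ideal gas doubling its volume at constant temperature.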

When the system evolves, it simply follows the path of least resistance in terms of probability. The system does not seek disorder; it merely explores the configuration space available to it, and since the disordered states vastly outnumber the ordered ones, the system is overwhelmingly likely to end up in a high-entropy state. [1] If you let the gas redistribute, it is not because some inherent law forces it to the right side; it is because there are countless more ways for the molecules to be scattered randomly than for them to stay clumped together. [1]
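The idea that the gas simply wanders through its available configurations can be illustrated with a toy simulation. The sketch below uses the Ehrenfest urn model, which is a swapped-in simplification rather than real molecular dynamics: at each step one particle is picked at random and moved to the other half of the box. The particle count, step count, and seed are arbitrary choices for the example.

```python
import random


def ehrenfest_left_counts(n_particles, n_steps, seed=0):
    """Track how many particles sit in the left half over time."""
    rng = random.Random(seed)
    n_left = n_particles  # start fully ordered: every particle on the left
    history = [n_left]
    for _ in range(n_steps):
        # A uniformly chosen particle is on the left with probability
        # n_left / n_particles; whichever side it is on, it hops across.
        if rng.random() < n_left / n_particles:
            n_left -= 1
        else:
            n_left += 1
        history.append(n_left)
    return history


history = ehrenfest_left_counts(n_particles=1000, n_steps=10000)
for step in (0, 100, 1000, 10000):
    print(f"step {step:>5}: fraction on the left = {history[step] / 1000:.3f}")
```

No rule in the code pushes particles to the right. The left fraction drifts toward one half only because, while the left side is crowded, a randomly chosen particle is more likely to be found there.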

# Probability's Role

The increase in entropy is fundamentally a statistical inevitability, much like tossing a billion coins and expecting to get nearly fifty percent heads, not zero or all heads. [1] The difference is that in physics, the number of "coins" (molecules/energy packets) is immense, making the statistical certainty practically absolute.
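The coin analogy can be made quantitative with two standard results for fair, independent coins: the fraction of heads has a standard deviation of $0.5/\sqrt{N}$, and the probability of all heads is $2^{-N}$. The helper names below are illustrative only.

```python
import math


def heads_fraction_std(n_coins):
    """Standard deviation of the fraction of heads for n fair coins."""
    return 0.5 / math.sqrt(n_coins)


def log10_prob_all_heads(n_coins):
    """Base-10 logarithm of the probability that every coin lands heads."""
    return -n_coins * math.log10(2)


for n in (100, 10**6, 10**9):
    print(
        f"N = {n:>10}: spread of heads fraction = {heads_fraction_std(n):.2e}, "
        f"log10 P(all heads) = {log10_prob_all_heads(n):.3e}"
    )
```

As the number of coins grows, the typical deviation from fifty percent shrinks while the all-heads outcome becomes exponentially less likely, which is the sense in which the statistical tendency hardens into a practical certainty.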

Imagine a simple system of four gas particles in a container, partitioned. The ordered state (all four on the left) is one specific arrangement. The most probable, disordered state (two on each side) has six different arrangements that result in that macrostate. [1] If we scale this up to Avogadro's number ($\approx 6.022 \times 10^{23}$ particles), the disparity between the number of ways to achieve order versus disorder becomes astronomically large, though still finite. [1]
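The four-particle count can be checked directly with binomial coefficients: the number of ways to place $k$ of $N$ distinguishable particles on the left is $\binom{N}{k}$. The following is a small sketch with illustrative function names.

```python
import math


def macrostate_multiplicities(n_particles):
    """Number of arrangements with k particles on the left, for k = 0..N."""
    return [math.comb(n_particles, k) for k in range(n_particles + 1)]


def balanced_fraction(n_particles):
    """Share of all arrangements that have exactly half the particles left."""
    return math.comb(n_particles, n_particles // 2) / 2**n_particles


print(macrostate_multiplicities(4))  # [1, 4, 6, 4, 1]
print(balanced_fraction(4))          # 0.375

# How many times more arrangements the balanced state has than the
# fully ordered one (which always has exactly one arrangement).
for n in (4, 20, 100):
    print(n, math.comb(n, n // 2))
```

Even at one hundred particles the balanced macrostate outnumbers the fully ordered one by a factor of roughly $10^{29}$, and the gap keeps growing exponentially with particle number.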

This leads to an interesting conceptual separation. The movement toward higher entropy is not driven by a force pushing things apart, but by the sheer combinatorial explosion of possibilities once constraints are removed. If you have a perfectly mixed cup of coffee and cream, the molecules occupy the macrostate with the largest number of microscopic configurations. For that cream to spontaneously unmix itself and perfectly reform into a droplet on the surface would require every single molecule involved to reverse its precise trajectory simultaneously, an event so statistically improbable it would effectively never happen within the age of the universe. [1]

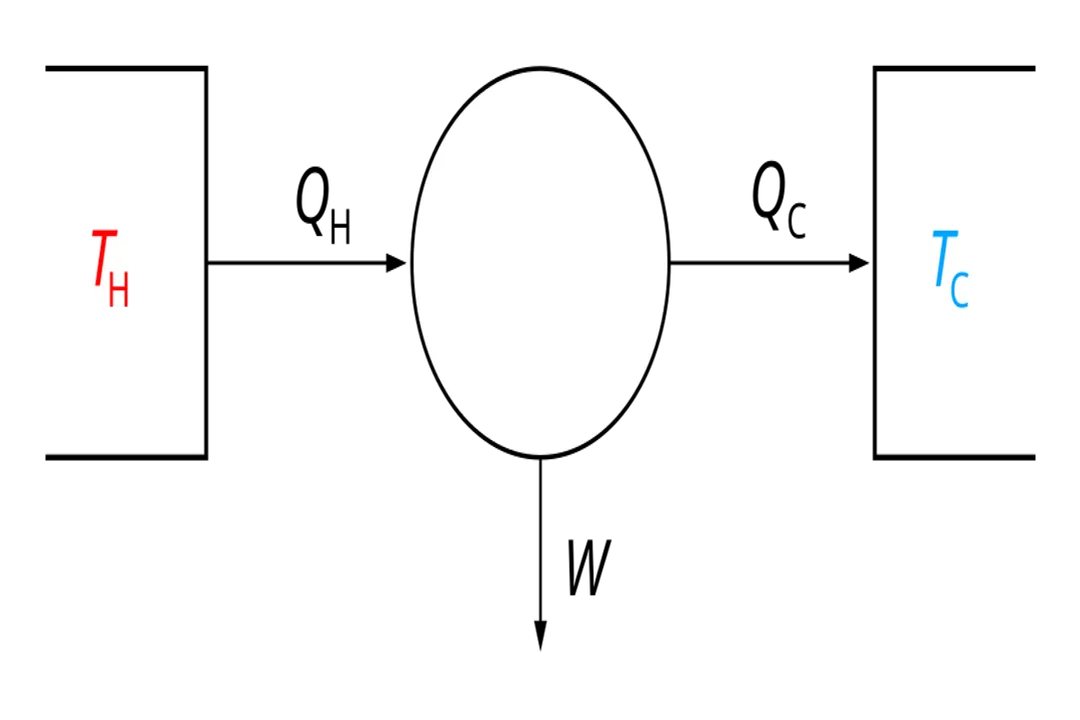

When thinking about energy, the same logic applies. Heat always flows from hot objects to cold objects because this process increases the number of ways the total energy can be distributed between the two bodies, leading to a higher combined entropy. [1]
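One way to see the counting argument for heat flow is a toy model of two small "Einstein solids" sharing a fixed number of energy quanta. This model is an assumption brought in for illustration and is not taken from the sources cited here. For a solid with $N$ oscillators holding $q$ quanta, the number of microstates is $\binom{q + N - 1}{q}$.

```python
import math


def multiplicity(n_oscillators, n_quanta):
    """Microstates of an Einstein solid with the given quanta."""
    return math.comb(n_quanta + n_oscillators - 1, n_quanta)


def joint_multiplicities(n_a, n_b, q_total):
    """Combined microstates for each way of splitting q_total quanta.

    Index q_a is the number of quanta held by solid A; solid B holds
    the remaining q_total - q_a.
    """
    return [
        multiplicity(n_a, q_a) * multiplicity(n_b, q_total - q_a)
        for q_a in range(q_total + 1)
    ]


joint = joint_multiplicities(n_a=3, n_b=3, q_total=6)
print(joint)  # [28, 63, 90, 100, 90, 63, 28]
print("most likely quanta in A:", joint.index(max(joint)))
```

If solid A starts with all six quanta (the "hot" body), only 28 of the 462 combined microstates match that split, while the even split has 100. Energy moving from A to B is therefore simply the system drifting toward the split with the most microstates.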

One practical way to visualize this is to think about the usefulness of energy. A lump of coal represents energy concentrated in a specific chemical arrangement (low entropy, high potential for work). Once burned, that energy disperses as low-temperature heat into the vastness of the room and the atmosphere (high entropy, very little potential for work). The total energy is conserved (First Law), but the quality or availability of that energy to perform work has decreased dramatically because it has spread out into so many more possible states. [7]
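The loss of energy quality can be estimated with the Carnot limit, which caps the work extractable from heat $Q$ available at temperature $T_{\text{source}}$ when the environment sits at $T_{\text{env}}$: $W_{\max} = Q\,(1 - T_{\text{env}}/T_{\text{source}})$. The temperatures below are illustrative assumptions, not measurements of any real furnace or room.

```python
def max_work(heat_joules, t_source, t_env):
    """Carnot upper bound on work from heat at t_source with sink t_env (K)."""
    if t_source <= t_env:
        return 0.0  # no temperature difference left to exploit
    return heat_joules * (1.0 - t_env / t_source)


T_ENV = 300.0  # assumed ambient temperature in K

# The same 1000 J of energy at progressively lower temperatures.
for t_source in (1500.0, 600.0, 310.0, 300.0):
    work = max_work(1000.0, t_source, T_ENV)
    print(f"heat at {t_source:6.1f} K -> at most {work:6.1f} J of work")
```

The energy total never changes, but as the heat spreads out and its temperature approaches that of the surroundings, the fraction that could ever be turned into work falls toward zero.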

# Reversibility Limit

The Second Law explicitly allows for a total entropy change of exactly zero ($\Delta S_{\text{total}} = 0$) under certain, highly specific, and usually theoretical conditions: the reversible process. [5][7] A reversible process is an idealized quasi-static process where the system passes through a sequence of equilibrium states without any net entropy generation within the system or surroundings. [7]

In the context of a closed system, if a process is perfectly reversible, the net change in entropy of the system plus its surroundings is zero, meaning the two together end with the same number of accessible microstates they started with. [5] Such processes are useful for thermodynamic calculations but do not actually occur in nature, as every real-world event involves friction, turbulence, or irreversible heat transfer, generating entropy. [7]
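A standard textbook comparison makes the distinction concrete: an ideal gas expanding isothermally to a larger volume, once reversibly and once as a free expansion into vacuum. The sketch assumes ideal-gas behavior, and the function name is illustrative. Because entropy is a state function, the gas gains $nR \ln(V_2/V_1)$ in both cases; only the surroundings differ.

```python
import math

R = 8.314462618  # gas constant in J/(mol K)


def isothermal_expansion_entropy(n_moles, volume_ratio, reversible):
    """Return (system, surroundings, total) entropy changes in J/K."""
    ds_system = n_moles * R * math.log(volume_ratio)
    # Reversible path: the gas absorbs heat from surroundings at the same
    # temperature, so the surroundings lose exactly what the gas gains.
    # Free expansion: no heat or work is exchanged with the surroundings.
    ds_surroundings = -ds_system if reversible else 0.0
    return ds_system, ds_surroundings, ds_system + ds_surroundings


for label, reversible in (("reversible", True), ("free expansion", False)):
    ds_sys, ds_surr, ds_total = isothermal_expansion_entropy(1.0, 2.0, reversible)
    print(f"{label:>14}: system {ds_sys:+.3f}, surroundings {ds_surr:+.3f}, "
          f"total {ds_total:+.3f} J/K")
```

The reversible path leaves the total unchanged, while the free expansion generates entropy that nothing offsets.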

This leads to an important point for engineers and chemists: whenever a real closed system undergoes an irreversible change, the total entropy of the system and its surroundings must increase. For instance, if you mix two gases in a closed container by removing a partition, the mixing is irreversible because the process is driven by the statistical likelihood of occupation, and the separated state is incredibly improbable. [1] You cannot rely on the random motion of molecules to perfectly re-segregate them again.
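For the gas-mixing example, the entropy generated can be estimated with the ideal entropy of mixing, $\Delta S_{\text{mix}} = -R \sum_i n_i \ln x_i$, where $x_i$ is the mole fraction of gas $i$. The sketch assumes ideal gases that start at the same temperature and pressure; the function name is illustrative.

```python
import math

R = 8.314462618  # gas constant in J/(mol K)


def mixing_entropy(moles):
    """Ideal entropy of mixing in J/K for a list of mole amounts."""
    n_total = sum(moles)
    # Skip empty components: their contribution tends to zero.
    return -R * sum(n * math.log(n / n_total) for n in moles if n > 0)


print(f"0.5 mol + 0.5 mol: {mixing_entropy([0.5, 0.5]):.3f} J/K")
print(f"0.9 mol + 0.1 mol: {mixing_entropy([0.9, 0.1]):.3f} J/K")
print(f"a single gas:      {mixing_entropy([1.0]):.3f} J/K")
```

Every genuine mixture gives a positive value, and no spontaneous process in the sealed container can run the calculation backwards.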

# Cosmic Scale

While we can easily clean our desks or freeze water in our freezers (both examples of local entropy decreases in open/non-isolated systems), the net balance tips toward disorder for the entire system we consider. [4] Consider the Earth. It is functionally a closed system regarding mass, but it is clearly open to energy flow from the Sun. [4] Life exists here, creating highly ordered structures.

However, the Sun is continuously converting mass into energy, which radiates outward. The Sun's entropy output is increasing massively due to fusion and radiative cooling. The low-entropy energy arriving at Earth (visible light) is converted into higher-entropy energy (waste heat radiated back into space). [4] The local decrease in entropy on Earth (life flourishing, complex cities forming) is paid for by a vastly larger increase in entropy generated by the Sun in the process of sustaining that life. [4] If we treat the Sun, the Earth, and the radiation they send into space as an approximately isolated system, the total entropy increases, satisfying the Second Law. [4]
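A rough estimate shows how strongly the Earth degrades the energy passing through it. The sketch treats both sunlight and the Earth's outgoing heat as blackbody radiation, for which the entropy carried per unit of energy is $4/(3T)$. The two temperatures are approximate, assumed values (the Sun's surface and the Earth's effective emission temperature), and the calculation is per watt so that no absolute power figure has to be assumed.

```python
T_SUN = 5772.0   # approximate solar surface temperature in K (assumed)
T_EARTH = 255.0  # approximate effective emission temperature of Earth in K (assumed)


def radiation_entropy_rate(power_watts, temperature):
    """Entropy carried by blackbody radiation, in W/K."""
    return 4.0 * power_watts / (3.0 * temperature)


# In a steady state the Earth radiates as much energy as it absorbs,
# so compare the entropy carried in and out by the same 1 W.
entropy_in = radiation_entropy_rate(1.0, T_SUN)
entropy_out = radiation_entropy_rate(1.0, T_EARTH)
print(f"entropy in : {entropy_in:.2e} W/K per watt absorbed")
print(f"entropy out: {entropy_out:.2e} W/K per watt emitted")
print(f"ratio out/in: {entropy_out / entropy_in:.1f}")
```

Under these assumptions each unit of energy leaves carrying roughly twenty times the entropy it arrived with, which is the budget that local ordering on Earth draws on.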

If one were to perform a grand accounting for the entire universe, which is the ultimate isolated system, the tally of disorder always trends upward. This unidirectional increase is what provides the "arrow of time". [5] It means that while we can engineer order locally, we are always participating in a larger, irreversible process of energy dispersal across the cosmos. This fundamental drive towards maximum probability ensures that the structure we observe today—the stars, the galaxies, the chemistry of life—is a temporary, transient state waiting for the statistical tide to sweep it toward thermal equilibrium, or what is sometimes called the "heat death" of the universe, where all energy is uniformly distributed and no further work can be done. [1]

# Citations

The entropy of a closed system doesn't always increase - Big Think

Does entropy always have to increase in a closed system? - Reddit

Why do we say that entropy always increases in closed systems ...

Entropy

Second law of thermodynamics - Wikipedia

Why is Entropy Always Increasing in Closed Systems?

6.6 The second law of thermodynamics for closed systems

Does entropy always increase in a closed system? - Facebook

In closed systems, why must entropy increase with time?