precision articles

What fundamental role do Confidence Intervals (CIs) serve beyond summarizing findings with a point estimate?

What is the most frequently set level of confidence for estimation procedures?

What does stating 95% confidence primarily assert about the estimation procedure itself?
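A small simulation can make the procedure-level meaning of "95% confidence" concrete. The sketch below is illustrative only: the true mean, the standard deviation, the sample size, and the function name `coverage_rate` are all assumptions chosen for the example, and the population standard deviation is treated as known so that a simple z-interval applies.

```python
import random
from math import sqrt
from statistics import NormalDist, mean

def coverage_rate(true_mean=50.0, sigma=10.0, n=30, trials=2000,
                  confidence=0.95, seed=0):
    """Fraction of simulated z-intervals that contain the true mean."""
    rng = random.Random(seed)
    # Two-sided critical value of the standard normal distribution.
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    # Assumption: sigma is known, so every interval has the same half-width.
    half_width = z * sigma / sqrt(n)
    hits = 0
    for _ in range(trials):
        sample = [rng.gauss(true_mean, sigma) for _ in range(n)]
        centre = mean(sample)
        if centre - half_width <= true_mean <= centre + half_width:
            hits += 1
    return hits / trials

rate = coverage_rate()
print(f"share of intervals covering the true mean: {rate:.3f}")
```

Over many repetitions the share of intervals that capture the true mean should sit close to the stated confidence level, while any single realized interval either contains the parameter or does not.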

Which three main elements are required for the construction of a confidence interval?

Assuming constant sample size, how does increasing the desired confidence level affect the Margin of Error (MOE)?

How does a larger sample size generally impact the Standard Error and the resulting interval?
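The effect of confidence level and sample size on the Margin of Error can be checked numerically. This is a minimal sketch under a normal approximation for a sample mean; the function name `margin_of_error` and the values `sd=10.0`, `n=100`, and `n=400` are hypothetical and not taken from the source.

```python
from math import sqrt
from statistics import NormalDist

def margin_of_error(sd, n, confidence=0.95):
    """Normal-approximation margin of error for a sample mean."""
    # Critical value: grows as the confidence level rises.
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    # Standard error: shrinks in proportion to 1 / sqrt(n).
    standard_error = sd / sqrt(n)
    return z * standard_error

moe_90 = margin_of_error(sd=10.0, n=100, confidence=0.90)
moe_95 = margin_of_error(sd=10.0, n=100, confidence=0.95)
moe_99 = margin_of_error(sd=10.0, n=100, confidence=0.99)
moe_95_n400 = margin_of_error(sd=10.0, n=400, confidence=0.95)

for label, value in [("90%, n=100", moe_90), ("95%, n=100", moe_95),
                     ("99%, n=100", moe_99), ("95%, n=400", moe_95_n400)]:
    print(f"{label}: +/- {value:.3f}")
```

With the sample size held fixed, a higher confidence level widens the interval; with the confidence level held fixed, quadrupling the sample size halves the margin of error.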

Which statement reflects a common, technically inaccurate interpretation of a single realized 95% CI?

If the 95% CI for the difference in conversion rates between two designs spans zero (e.g., -0.5 to +1.5 percentage points), what can be concluded about the difference?

If the 95% CI for the difference in recovery times is 2.1 to 4.5 days, what is the appropriate decision regarding a null hypothesis of zero difference?
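The two interval examples in the questions above can be checked with a one-line rule: a 95% CI for a difference that lies entirely on one side of zero corresponds to rejecting a zero-difference null hypothesis in a two-sided test at the 5% level. The helper name `interval_excludes_zero` is a hypothetical one introduced for this sketch.

```python
def interval_excludes_zero(lower, upper):
    """True when a CI for a difference lies entirely on one side of zero."""
    return lower > 0 or upper < 0

# Endpoints taken from the two examples above.
conversion_ci = (-0.5, 1.5)  # difference in conversion rates
recovery_ci = (2.1, 4.5)     # difference in recovery times, in days

for name, (lower, upper) in [("conversion", conversion_ci),
                             ("recovery", recovery_ci)]:
    if interval_excludes_zero(lower, upper):
        print(f"{name}: interval excludes zero, reject a zero-difference null")
    else:
        print(f"{name}: interval includes zero, zero difference remains plausible")
```

An interval that includes zero does not show that the difference is zero; it only means the data are compatible with no difference as well as with the other values in the interval.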

Why is it generally impossible to construct a finite confidence interval that guarantees 100% coverage of the true population parameter?

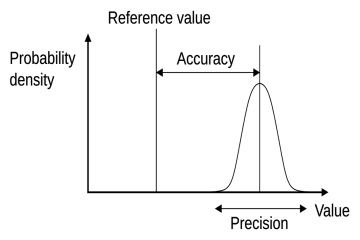

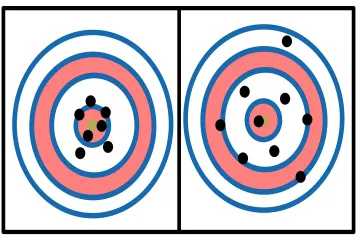

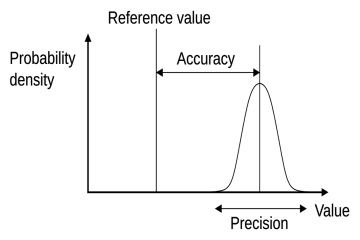

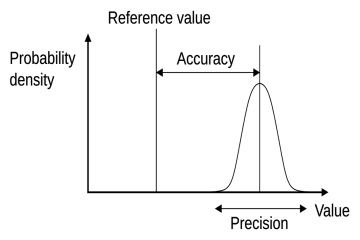

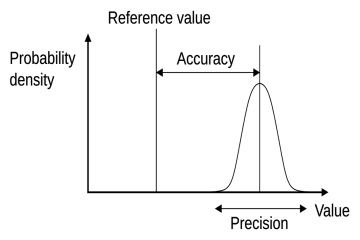

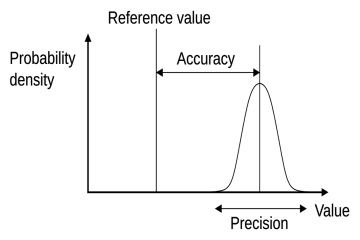

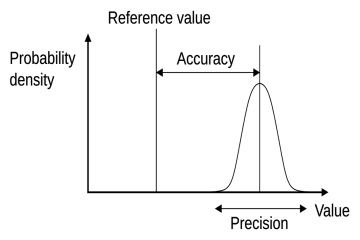

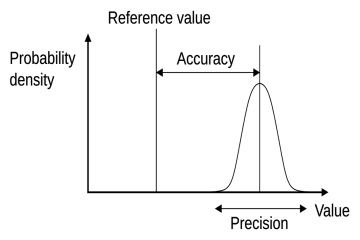

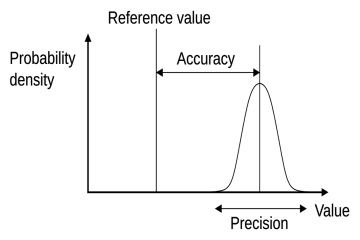

What does precision primarily measure regarding repeated measurements?

Accuracy in measurement fundamentally describes closeness to what value?

What is the relationship between high accuracy and bias?
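Accuracy and precision can be separated numerically by looking at the offset of the mean from a reference value (bias) and at the spread of repeated readings. The readings, the reference value of 100.0 g, and the function name `bias_and_spread` below are invented for illustration only.

```python
from statistics import mean, stdev

def bias_and_spread(readings, reference):
    """Return (bias, sample standard deviation) for repeated readings."""
    return mean(readings) - reference, stdev(readings)

reference_mass = 100.0  # hypothetical accepted value, in grams

# Hypothetical data: tightly clustered readings that sit away from the reference.
tight_but_offset = [102.1, 102.0, 101.9, 102.0, 102.0]
# Hypothetical data: readings centred on the reference but widely scattered.
centred_but_scattered = [98.0, 102.0, 100.5, 99.0, 100.5]

bias_a, spread_a = bias_and_spread(tight_but_offset, reference_mass)
bias_b, spread_b = bias_and_spread(centred_but_scattered, reference_mass)

print(f"tight but offset:      bias={bias_a:+.2f} g, spread={spread_a:.2f} g")
print(f"centred but scattered: bias={bias_b:+.2f} g, spread={spread_b:.2f} g")
```

The first data set is precise but biased; the second has almost no bias on average but poor precision.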

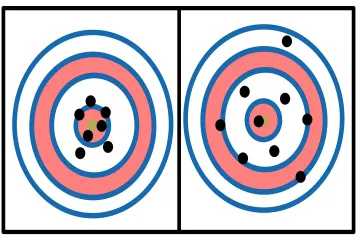

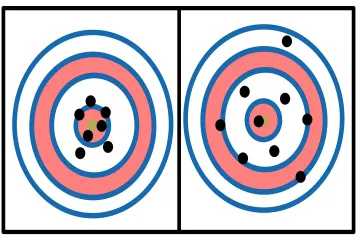

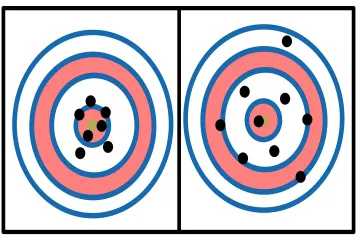

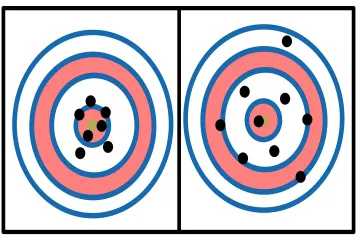

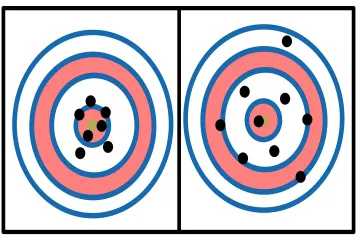

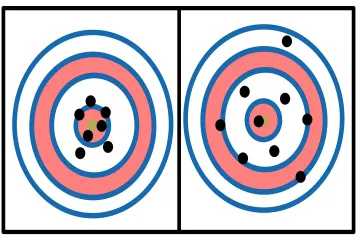

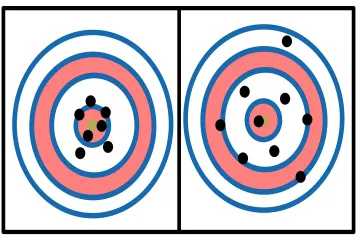

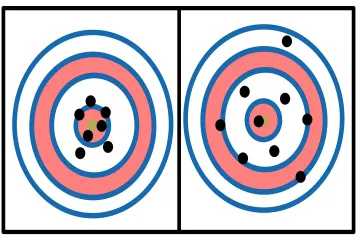

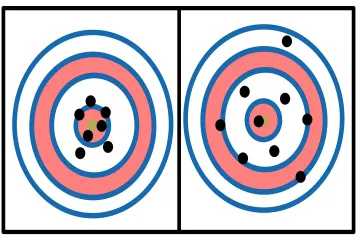

In the target analogy, what scenario represents low accuracy but high precision?

What type of error is typically attributed to measurements showing poor precision?

How is trueness technically defined in relation to systematic error components?

Which factor is a common cause of systematic error, leading to low accuracy?

If a team is struggling with consistency across operational measurements, what procedural improvement should be prioritized first?

What distinguishes resolution from precision?

What metric integrates both the random scatter (imprecision) and the estimated systematic offset (bias) into a single budget?
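One common way to fold random scatter and systematic offset into a single figure is a root-sum-square combination. The source does not name the metric it has in mind, so the sketch below is an assumption rather than the intended answer; the readings, the reference value, and the function name `error_budget` are hypothetical. For a fixed data set, the combination equals the root-mean-square error about the reference when the population standard deviation is used.

```python
from math import sqrt
from statistics import mean, pstdev

def error_budget(readings, reference):
    """Return (bias, scatter, root-sum-square combination) for the readings."""
    bias = mean(readings) - reference    # estimated systematic offset
    scatter = pstdev(readings)           # random scatter about the mean
    combined = sqrt(bias ** 2 + scatter ** 2)
    return bias, scatter, combined

readings = [10.3, 10.1, 10.2, 10.4, 10.0]  # hypothetical repeated readings
reference = 10.0                           # hypothetical accepted value

bias, scatter, combined = error_budget(readings, reference)

# Cross-check: root-mean-square error computed directly against the reference.
rmse = sqrt(mean([(x - reference) ** 2 for x in readings]))

print(f"bias={bias:.3f}, scatter={scatter:.3f}, combined={combined:.3f}, rmse={rmse:.3f}")
```

Formal uncertainty budgets usually correct for a known bias first and then combine the remaining standard uncertainties, so this decomposition is best read as a quick diagnostic.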

What concept describes how close a measurement is to the true or accepted value of what is being measured?

Which type of error consistently shifts measurements in the same direction, regardless of the readings' closeness to each other?

What causes measurements to fluctuate unpredictably around the true value and primarily limits precision?

How can the negative effect of random errors on a final reported result typically be minimized?

What term defines the smallest increment an instrument is physically capable of detecting or displaying?

What fundamental principle of quantum mechanics imposes an absolute limit on simultaneously knowing a particle's position and momentum with arbitrary precision?

When reading a graduated cylinder, what human error occurs if the observer's eye is not positioned directly perpendicular to the liquid level?

If darts land in a tight cluster far away from the center of a target, what characteristic do the throws demonstrate?

In engineering contexts, what term defines the acceptable range of variation permitted for a component to still be deemed functional?